OpenAI API pricing has become a common topic of discussion among developers, startup founders, and tech enthusiasts in Canada. If the pricing still seems unclear, it is usually because nobody has explained how it actually works. Understanding API pricing is essential when you build AI applications, chatbots, automation systems, or personalised workflows.

This guide explains how OpenAI’s pricing works and how to reduce your costs, with a focus on developers and businesses operating in Canada.

What Is OpenAI API Pricing?

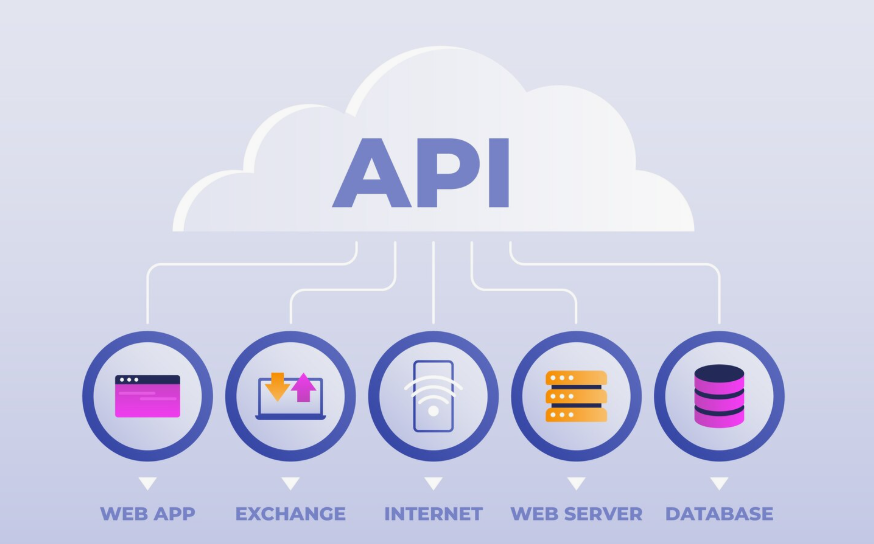

OpenAI API pricing is the set of fees developers pay to use OpenAI’s models through the API. Rather than charging a fixed monthly subscription, OpenAI bills for actual usage. Two factors determine the charge: the number of tokens processed and the model selected.

This approach suits Canadian startups and small business teams. You pay only for what you use, so you can scale up without a large upfront investment.

How OpenAI Charges: Tokens Explained

To understand OpenAI API pricing, you first need to know what a token is.

Tokens are units of text: word fragments, whole words, or punctuation marks. The phrase “Hello world!” breaks into several tokens. You are charged for both the input text you send and the output text the model returns.

The more tokens you use, the more you pay. Long prompts combined with long outputs are what make a call expensive.
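As a rough illustration of how text length maps to tokens, the sketch below uses the common rule of thumb of about four characters per token for English text. That ratio is an assumption suitable for budgeting only, and `estimate_tokens` and `CHARS_PER_TOKEN` are hypothetical names; exact counts depend on the model's tokenizer.

```python
import math

# Rough rule of thumb for English text (an assumption, not an exact count):
# about 4 characters per token. Real counts come from the model's tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate; at least 1 for any non-empty text."""
    if not text:
        return 0
    return max(1, math.ceil(len(text) / CHARS_PER_TOKEN))


prompt = "Hello world!"
print(estimate_tokens(prompt))  # 12 characters / 4 -> prints 3
```

An estimate like this is good enough for a first budget; for billing-grade numbers, rely on the token counts reported in the API response.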

OpenAI API Pricing Overview (2026)

OpenAI offers a wide range of models, and each has its own pricing. These models differ in capability and cost, from powerful, large models to faster, cheaper mini versions.

Here’s a breakdown of the current API pricing as of 2026 (NOTE: prices are in USD per 1 million tokens):

Text & Chat Models

| Model | Input | Cached Input | Output |
|---|---|---|---|
| GPT‑5.1 | $1.25 | $0.125 | $10.00 |
| GPT‑5 mini | $0.25 | $0.025 | $2.00 |
| GPT‑5 nano | $0.05 | $0.005 | $0.40 |
| GPT‑4.1 | $2.00 | $0.50 | $8.00 |
| GPT‑4.1 mini | $0.40 | $0.10 | $1.60 |
| GPT‑4.1 nano | $0.10 | $0.025 | $0.40 |
| GPT‑realtime | $4.00 | $0.40 | $16.00 |
| GPT‑realtime‑mini | $0.60 | $0.06 | $2.40 |

Note: “Cached Input” refers to input tokens that repeat the beginning of a recently processed prompt. These tokens are billed at a lower rate than fresh input tokens.
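To make the table concrete, here is a minimal sketch that stores the rates as data and computes the cost of a single call, including the cached-input discount. The dictionary keys, the `Price` class, and `call_cost_usd` are hypothetical names chosen for illustration; the keys are not necessarily the exact model identifiers the API expects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """Prices in USD per 1 million tokens."""
    input: float
    cached_input: float
    output: float


# Rates copied from the table above. Keys are illustrative labels only.
PRICES = {
    "gpt-5.1": Price(1.25, 0.125, 10.00),
    "gpt-5-mini": Price(0.25, 0.025, 2.00),
    "gpt-5-nano": Price(0.05, 0.005, 0.40),
    "gpt-4.1": Price(2.00, 0.50, 8.00),
    "gpt-4.1-mini": Price(0.40, 0.10, 1.60),
    "gpt-4.1-nano": Price(0.10, 0.025, 0.40),
    "gpt-realtime": Price(4.00, 0.40, 16.00),
    "gpt-realtime-mini": Price(0.60, 0.06, 2.40),
}


def call_cost_usd(model: str, input_tokens: int, output_tokens: int,
                  cached_tokens: int = 0) -> float:
    """Cost of one call in USD.

    cached_tokens is the part of input_tokens billed at the cached rate.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    price = PRICES[model]
    fresh_tokens = input_tokens - cached_tokens
    total_per_million = (fresh_tokens * price.input
                         + cached_tokens * price.cached_input
                         + output_tokens * price.output)
    return total_per_million / 1_000_000


# One million input tokens plus one million output tokens on GPT-4.1.
print(call_cost_usd("gpt-4.1", 1_000_000, 1_000_000))  # prints 10.0
```

Keeping the rates in one table-like structure means a price change only needs to be updated in a single place.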

OpenAI API Pricing: Prices by Model Scale

OpenAI offers models at large, mini, and nano scales.

Large models such as GPT‑5.1 are the most capable and suit tasks that require complex reasoning.

Mini and nano models are built to be faster and cheaper, which makes them a good fit for simpler jobs such as summarising text or producing routine chatbot replies.

Realtime models are designed for low-latency applications such as live voice or interactive chat.

This flexibility lets developers in Canada choose the right balance between performance and cost.

Image, Audio & Video API Pricing

OpenAI maintains APIs for multimedia tasks.

- Image generation: Models such as GPT‑image‑1 and GPT‑image‑1-mini cost roughly $8 to $40 per million tokens.

- Audio generation and speech-to-text: These services are also billed per token, at a slightly higher per-token rate.

- Video APIs: Sora‑2 services, for instance, are charged per second of video generated.

Pricing therefore differs by media type, so Canadian teams should budget image, audio, and video workloads separately from text.

How Much Do I Have to Shell Out, Though?

Let’s assume, for instance, you are developing a Canada-focused app that uses GPT-5 mini:

- Your prompt consists of 500 tokens.

- GPT-5 mini processes the prompt and returns a response of 1,500 tokens.

- Thus, the total would stand at 2000 tokens.

Cost (ballpark):

- Input: 500 × $0.25 / 1,000,000 = $0.000125

- Output: 1,500 × $2.00 / 1,000,000 = $0.003

Your total will be about $0.003125 for that call to the API, or roughly a third of a US cent.
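Because prices are quoted per 1 million tokens, each token count has to be divided by 1,000,000 after multiplying by the rate. The short sketch below works through the same GPT-5 mini example; the variable names are illustrative.

```python
# GPT-5 mini rates from the pricing table, in USD per 1 million tokens.
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 2.00
TOKENS_PER_M = 1_000_000

input_tokens = 500
output_tokens = 1500

input_cost = input_tokens * INPUT_PRICE_PER_M / TOKENS_PER_M
output_cost = output_tokens * OUTPUT_PRICE_PER_M / TOKENS_PER_M
total_cost = input_cost + output_cost

print(f"Input:  ${input_cost:.6f}")   # Input:  $0.000125
print(f"Output: ${output_cost:.6f}")  # Output: $0.003000
print(f"Total:  ${total_cost:.6f}")   # Total:  $0.003125
```

Note that the output side accounts for almost all of the cost here, which is typical when responses are longer than prompts.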

In other words, you pay only for the tokens processed in that call; there is no standing charge between calls.

Regional Pricing Influence

Applications that must process or store data in a specific region, such as Canada, may carry additional regional processing charges, so some deployments can end up paying slightly more per API call.

If you are deploying your services in Canada, consider using regional endpoints where they are available. They can reduce latency, support compliance with Canadian data privacy laws, and help maintain trust with Canadian users.

Cost-Saving Strategies for Your OpenAI API Usage

The strategies below help keep token usage, and therefore your bill, under control.

1. Use Cached Tokens

Reuse repeated prompts where you can: repeated context tokens are billed at the lower cached-input rate rather than the standard input rate.

2. Opt for Mini or Nano Models

Are you working on simple tasks such as summarisation? Mini and nano models are a cheaper and faster alternative.

3. Batch API Calls

When results are not needed immediately, group requests and submit them through OpenAI’s Batch API, which is cheaper than sending each request individually.

4. Limit the Output Tokens

Set a cap on output tokens so the API cannot produce overly long responses. Sensible limits reduce costs and keep outputs concise and relevant.
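The effect of caching and output limits can be estimated before you send a single request. The sketch below computes an upper bound on one call's cost using the GPT-5 mini rates from the pricing table. The workload figures and the function name `worst_case_cost_usd` are hypothetical, and the name of the request parameter that sets the output cap depends on the endpoint you call.

```python
# GPT-5 mini rates from the pricing table, in USD per 1 million tokens.
INPUT_PER_M = 0.25
CACHED_INPUT_PER_M = 0.025
OUTPUT_PER_M = 2.00


def worst_case_cost_usd(input_tokens: int, output_cap: int,
                        cached_tokens: int = 0) -> float:
    """Upper bound on one call's cost when output is capped at output_cap tokens."""
    fresh_tokens = input_tokens - cached_tokens
    per_million = (fresh_tokens * INPUT_PER_M
                   + cached_tokens * CACHED_INPUT_PER_M
                   + output_cap * OUTPUT_PER_M)
    return per_million / 1_000_000


# Hypothetical workload: a 2,000-token prompt whose first 1,500 tokens
# (system instructions, shared context) repeat on every call.
long_output = worst_case_cost_usd(2_000, output_cap=1_500)
capped = worst_case_cost_usd(2_000, output_cap=300)
capped_and_cached = worst_case_cost_usd(2_000, output_cap=300, cached_tokens=1_500)

for label, cost in [("long output", long_output),
                    ("capped output", capped),
                    ("capped + cached", capped_and_cached)]:
    print(f"{label:<16} ${cost:.6f}")
```

In this made-up workload the output cap saves far more than caching does, because output tokens are priced several times higher than input tokens.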

How to Select the Best Model

Ultimately, the choice of the right model largely depends on its intended use. In addition, developers should carefully consider factors such as performance requirements, budget constraints, and expected outcomes before making a decision.

- For high-precision reasoning or code generation, use a larger model such as GPT‑5.1.

- For typical natural language tasks, GPT‑4.1 mini offers a good balance of cost and performance.

- For budget-constrained or low-latency applications, consider GPT‑5 nano or GPT‑realtime‑mini.

In practice, developers usually test several models on their own workload to find the best balance of quality and cost.
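One way to start that comparison is to project monthly spend for the same workload across the candidate models. The sketch below does this with rates from the pricing table; the workload (100,000 calls a month, 500 tokens in, 1,500 out) and the function names are hypothetical, and cost says nothing about output quality, which still has to be tested on your own prompts.

```python
# USD per 1 million tokens (input, output), taken from the pricing table.
# The keys are illustrative labels for the models named in the list above.
RATES = {
    "GPT-5.1": (1.25, 10.00),
    "GPT-4.1 mini": (0.40, 1.60),
    "GPT-5 nano": (0.05, 0.40),
    "GPT-realtime-mini": (0.60, 2.40),
}


def monthly_cost_usd(model: str, calls: int, input_tokens: int,
                     output_tokens: int) -> float:
    """Projected monthly spend for `calls` identical requests."""
    input_rate, output_rate = RATES[model]
    per_call = (input_tokens * input_rate
                + output_tokens * output_rate) / 1_000_000
    return per_call * calls


def rank_by_cost(calls: int, input_tokens: int, output_tokens: int):
    """Return (model, monthly cost) pairs sorted from cheapest to most expensive."""
    costs = {
        model: monthly_cost_usd(model, calls, input_tokens, output_tokens)
        for model in RATES
    }
    return sorted(costs.items(), key=lambda item: item[1])


# Hypothetical workload: 100,000 calls a month, 500 tokens in, 1,500 out.
for model, cost in rank_by_cost(100_000, 500, 1_500):
    print(f"{model:<18} ${cost:,.2f}")
```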

OpenAI API Pricing: Billing Practices

All usage is billed in US dollars, so Canadian customers need to convert costs to Canadian dollars when budgeting. Tracking your usage during the month helps you plan for the next month's expenses.

Because OpenAI bills monthly based on usage, controlling your token consumption directly reduces your bill.
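For budgeting in Canadian dollars, a small helper like the one below can convert a projected USD bill. The exchange rate shown is a placeholder for illustration, not a real quote, and `usd_to_cad` is a hypothetical name; your card or bank may also add its own conversion fee.

```python
from decimal import Decimal, ROUND_HALF_UP


def usd_to_cad(amount_usd: Decimal, cad_per_usd: Decimal) -> Decimal:
    """Convert a USD amount to CAD, rounded to the nearest cent."""
    if cad_per_usd <= 0:
        raise ValueError("exchange rate must be positive")
    return (amount_usd * cad_per_usd).quantize(Decimal("0.01"),
                                               rounding=ROUND_HALF_UP)


# Placeholder rate for illustration only; look up the current rate
# before budgeting.
PLACEHOLDER_CAD_PER_USD = Decimal("1.40")

monthly_bill_usd = Decimal("260.00")  # hypothetical monthly usage bill
print(usd_to_cad(monthly_bill_usd, PLACEHOLDER_CAD_PER_USD))  # prints 364.00
```

`Decimal` is used instead of `float` so that currency amounts round predictably to the cent.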

OpenAI API Pricing: Real-World Considerations for Canada

Canada's tech sector is growing quickly, particularly in cities such as Vancouver and Toronto, where demand for AI apps is strong.

Anyone building data analysis tools, chatbots, translation services, or creative assistants stands to benefit from that demand. Deployed with forethought and used consistently, these models can help individuals and businesses move faster than their competitors and sustain that progress over time.

Mistakes to Avoid

- Using the Most Powerful Model for Everything

Not every task needs a top-tier model. Choose less expensive models for simpler tasks.

- Forgetting to Cache Repeated Prompts

Cached input tokens are billed at a fraction of the standard input rate. In the pricing table above, that is a tenth of the input price for the GPT‑5 models and a quarter for the GPT‑4.1 models.

- Ignoring Token Limits

Without output token limits, responses can grow needlessly long and run up avoidable charges.

Final Thoughts

Understanding OpenAI API pricing goes beyond the figures. It means making sound choices based on your use case, performance needs, and budget.

For Canadian developers and businesses, properly utilising models can greatly reduce costs while offering leading AI capabilities.

Teams working on data analytics, chatbots, language translation, or creative assistant projects that put these tools to effective use can outperform their peers and grow faster in 2026.